Command

Note

For most use cases, we recommend our latest model, Command R, instead.

| Latest Model | Description | Max Tokens (Context Length) | Endpoints |
|---|---|---|---|
| command | An instruction-following conversational model that performs language tasks with high quality, more reliably and with a longer context than our base generative models. | 4096 | Chat, Summarize |
| command-light | A smaller, faster version of command that is almost as capable. | 4096 | Chat, Summarize |
| command-nightly | To reduce the time between major releases, we put out nightly versions of command models. For command, that is command-nightly. Be advised that command-nightly is the latest, most experimental, and (possibly) unstable version of its default counterpart. Nightly releases are updated regularly, without warning, and are not recommended for production use. | 128K | Chat |
| command-light-nightly | To reduce the time between major releases, we put out nightly versions of command models. For command-light, that is command-light-nightly. Be advised that command-light-nightly is the latest, most experimental, and (possibly) unstable version of its default counterpart. Nightly releases are updated regularly, without warning, and are not recommended for production use. | 4096 | Chat |

The Command family of models responds well to instruction-like prompts and is available in two variants: command-light and command. The command model performs better, while command-light is a good option for applications that require fast responses.

To reduce the turnaround time for releases, we have nightly versions of Command available. This means that every week, you can expect the performance of command-nightly and command-light-nightly to improve.
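The trade-offs described above (command vs. command-light, stable vs. nightly) can be captured in a small helper. This is a minimal sketch, not part of the Cohere SDK: the function `choose_command_model` and its `prefer_speed` and `production` flags are hypothetical, and only the model names come from the table. Following the table's advice, it never returns a nightly model when `production` is true.

```python
def choose_command_model(prefer_speed: bool = False, production: bool = True) -> str:
    """Return a Command model name (hypothetical helper, not a Cohere SDK function).

    prefer_speed: pick the smaller, faster command-light variant.
    production:   stay on stable models, since nightly releases change
                  without warning and are not recommended for production.
    """
    base = "command-light" if prefer_speed else "command"
    return base if production else f"{base}-nightly"


print(choose_command_model())                                       # command
print(choose_command_model(prefer_speed=True))                      # command-light
print(choose_command_model(production=False))                       # command-nightly
print(choose_command_model(prefer_speed=True, production=False))    # command-light-nightly
```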

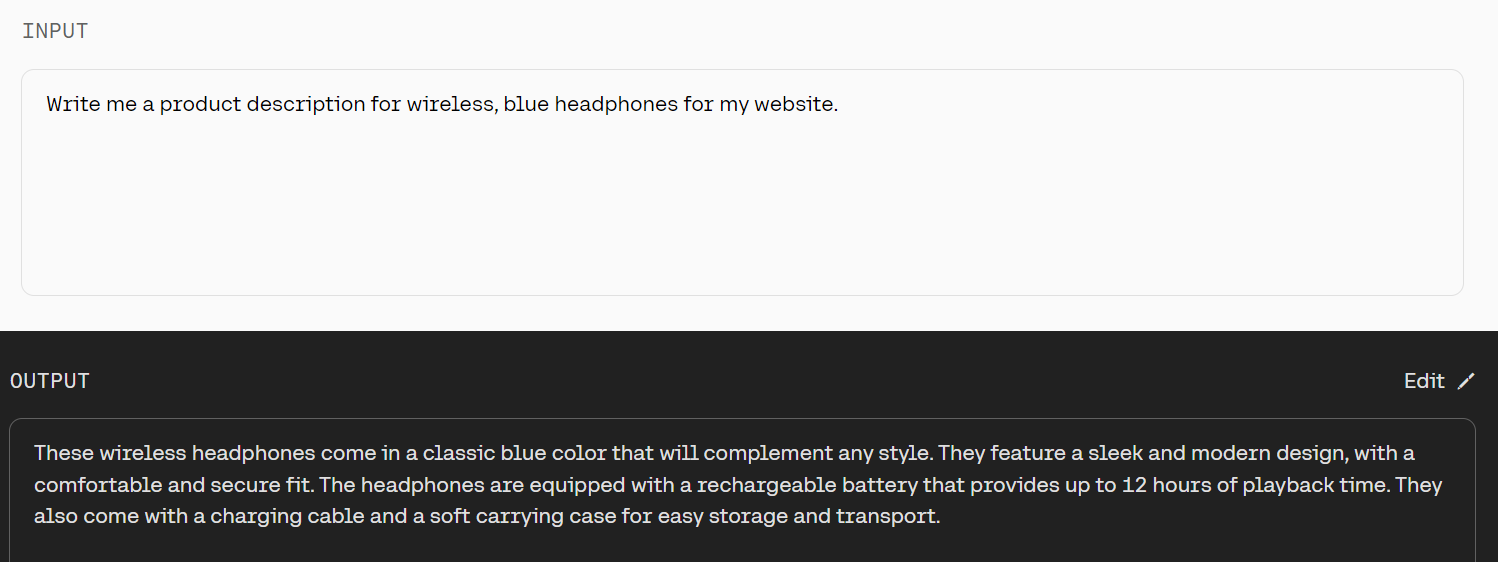


Get Started

Set up

Install the SDK, if you haven't already.

```bash
pip install cohere
```

Then, set up the Cohere client.

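```python
import os

import cohere
# Read the API key from the CO_API_KEY environment variable instead of hard-coding it
co = cohere.Client(api_key=os.environ["CO_API_KEY"])
```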

Create prompt

```python
message = "Write an introductory paragraph for a blog post about language models."
```

Generate text

```python
response = co.chat(
    model='command',
    message=message,
)

intro_paragraph = response.text
```
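To switch between command and command-light without duplicating the request, the call can be wrapped in a function that takes the model name as a parameter. This is a minimal sketch; `generate_text` and `FakeClient` are hypothetical names. `FakeClient` is an offline stand-in that mimics only the `chat(model=..., message=...)` call and the `.text` attribute used above, so the example runs without an API key; in practice you would pass the real `co` client instead.

```python
from types import SimpleNamespace


def generate_text(client, message: str, model: str = "command") -> str:
    """Send one chat message and return the generated text.

    `client` is expected to expose chat(model=..., message=...) returning an
    object with a `.text` attribute, like the cohere.Client call shown above.
    """
    response = client.chat(model=model, message=message)
    return response.text


class FakeClient:
    """Hypothetical offline stand-in for cohere.Client, for illustration only."""

    def chat(self, model: str, message: str):
        # Echo the inputs back instead of calling the API
        return SimpleNamespace(text=f"[{model}] {message}")


print(generate_text(FakeClient(), "Hello", model="command-light"))  # [command-light] Hello
```

With a real client, the same function would be called as `generate_text(co, message, model="command-light")`.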

FAQ

Can users train Command?

Users cannot train Command in OS at this time. However, our team can handle this on a case-by-case basis. Please email [email protected] if you’re interested in training this model.

Where can I leave feedback about Cohere generative models?

Please leave feedback on Discord.

What's the context length of the command models?

A model's "context length" refers to the number of tokens it's capable of processing at one time. In the table above, you can find the context length (and a few other relevant parameters) for the different versions of the command models.
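As a rough illustration, the limits from the table can be used to check whether a request fits before sending it. This is a sketch under stated assumptions: the `CONTEXT_LENGTH` mapping and `fits_in_context` helper are hypothetical, "128K" is read as 128,000 tokens, token counts are supplied by the caller (counting them requires the model's tokenizer, which is not shown), and the prompt and the generated output are assumed to share the same context window.

```python
# Context lengths in tokens, copied from the table above.
# Assumption: "128K" is read as 128,000 tokens.
CONTEXT_LENGTH = {
    "command": 4096,
    "command-light": 4096,
    "command-nightly": 128_000,
    "command-light-nightly": 4096,
}


def fits_in_context(model: str, prompt_tokens: int, max_output_tokens: int) -> bool:
    """Return True if the prompt plus the requested output fit in the model's context.

    Assumes input and output tokens share one context window.
    """
    if model not in CONTEXT_LENGTH:
        raise ValueError(f"unknown model: {model}")
    return prompt_tokens + max_output_tokens <= CONTEXT_LENGTH[model]


print(fits_in_context("command", 3500, 500))          # True  (4000 <= 4096)
print(fits_in_context("command", 3800, 500))          # False (4300 > 4096)
print(fits_in_context("command-nightly", 3800, 500))  # True
```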
